
Introduction

The Intel® Distribution of OpenVINO™ toolkit quickly deploys applications and solutions that emulate human vision. Based on Convolutional Neural Networks (CNN), the toolkit extends computer vision (CV) workloads across Intel® hardware, maximizing performance.

The Intel® Distribution of OpenVINO™ toolkit for macOS*:

- Enables CNN-based deep learning inference on the edge
- Supports heterogeneous execution across Intel® CPU and Intel® Neural Compute Stick 2 with Intel® Movidius™ VPUs
- Speeds time-to-market via an easy-to-use library of computer vision functions and pre-optimized kernels
- Includes optimized calls for computer vision standards including OpenCV*

The following components are installed by default:

- Model Optimizer: This tool imports, converts, and optimizes models, which were trained in popular frameworks, to a format usable by Intel tools, especially the Inference Engine. Popular frameworks include Caffe*, TensorFlow*, MXNet*, and ONNX*.
- Inference Engine: This is the engine that runs a deep learning model.

TIP: If you want a quick start with the OpenVINO™ toolkit, you can use the OpenVINO™ Deep Learning Workbench (DL Workbench).

An internet connection is required to follow the steps in this guide. If you have access to the Internet through a proxy server only, make sure that it is configured in your OS environment.

The Intel® Distribution of OpenVINO™ is supported on macOS* 10.15.x versions.

Supported hardware:

- 6th to 11th generation Intel® Core™ processors and Intel® Xeon® processors
- Intel® Xeon® Scalable processor (formerly Skylake and Cascade Lake)
- 3rd generation Intel® Xeon® Scalable processor (formerly code named Cooper Lake)

Software Requirements

- CMake: install it (choose "macOS 10.13 or later"), then add /Applications/CMake.app/Contents/bin to your PATH (for a default install).
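As a quick sanity check on the software requirements, the sketch below reports whether the expected tools are reachable from the shell. The helper names `have_tool` and `report_tools` are hypothetical, and checking for `python3` is an assumption based on the Model Optimizer being a Python*-based tool; the guide itself only spells out the CMake path.

```shell
#!/usr/bin/env bash
# Succeed if the named command is reachable through PATH.
have_tool() {
    command -v "$1" >/dev/null 2>&1
}

# Print a one-line status for each tool name passed in.
report_tools() {
    local tool
    for tool in "$@"; do
        if have_tool "$tool"; then
            echo "$tool: found at $(command -v "$tool")"
        else
            echo "$tool: not found on PATH"
        fi
    done
}

# Default CMake location named in this guide; prepended only if it exists.
CMAKE_BIN="/Applications/CMake.app/Contents/bin"
if [ -d "$CMAKE_BIN" ]; then
    PATH="$CMAKE_BIN:$PATH"
    export PATH
fi

# python3 is an assumed requirement, not one listed in this copy of the guide.
report_tools cmake python3
```

The PATH change here only lasts for the current shell session; making it permanent would mean adding the same line to a shell startup file.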

Install the Intel® Distribution of OpenVINO™ Toolkit

This guide provides step-by-step instructions on how to install the Intel® Distribution of OpenVINO™ 2020.1 toolkit for macOS*.

(Optional) Apple Xcode* IDE (not required for OpenVINO, but useful for development). To install the Xcode command line tools, run xcode-select --install in the terminal from any directory.
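Before running the installer prompt, it may be worth checking whether the developer tools are already present. This is a minimal sketch: the function name `xcode_tools_status` is hypothetical, and it assumes that `xcode-select -p` exits with a non-zero status when no developer directory is configured.

```shell
#!/usr/bin/env bash
# Describe the state of the Xcode command line tools in one line.
xcode_tools_status() {
    if ! command -v xcode-select >/dev/null 2>&1; then
        # Not on macOS, or the tool is missing entirely.
        echo "xcode-select not found; this check only applies to macOS"
    elif xcode-select -p >/dev/null 2>&1; then
        # -p prints the active developer directory when one is set.
        echo "Developer tools found at: $(xcode-select -p)"
    else
        echo "Developer tools not installed; run: xcode-select --install"
    fi
}

xcode_tools_status
```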

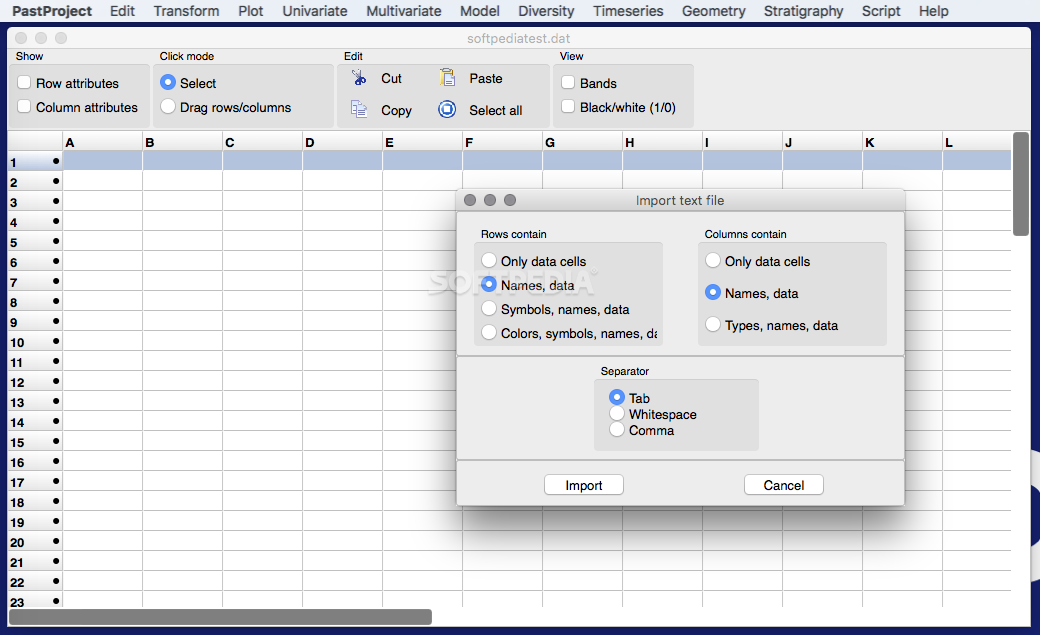

Install the Intel® Distribution of OpenVINO™ Toolkit Core Components

This guide covers installing the OpenVINO toolkit core components, getting started with code samples and demo applications, and uninstalling the toolkit.

If you have a previous version of the Intel® Distribution of OpenVINO™ toolkit installed, rename or delete the two directories associated with it before you continue.

1. Download the latest version of the OpenVINO toolkit for macOS*, then return to this guide to proceed with the installation.
2. Go to the directory in which you downloaded the Intel® Distribution of OpenVINO™ toolkit. This document assumes this is your Downloads directory. By default, the disk image file is saved as m_openvino_toolkit_p_.dmg.
3. Open the disk image file. The disk image is mounted to /Volumes/m_openvino_toolkit_p_ and automatically opened in a separate window.
4. Run the installation wizard application m_openvino_toolkit_p_.app.
5. On the User Selection screen, choose a user account for the installation. The default installation directory path depends on the privileges you choose for the installation:
   - For root or administrator: /opt/intel/openvino_/
   - For regular users: /home//intel/openvino_/
   For simplicity, a symbolic link to the latest installation is also created: /home//intel/openvino_2021/.
6. If needed, click Customize to change the installation directory or the components you want to install.
7. Click Next and follow the instructions on your screen.
8. If you are missing external dependencies, you will see a warning screen. Take note of any dependencies you are missing. After installing the Intel® Distribution of OpenVINO™ toolkit core components, you will need to install the missing dependencies.

NOTE: If there is an OpenVINO™ toolkit version previously installed on your system, the installer will use the same destination directory for next installations.
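Because the installation directory depends on the privileges chosen during setup, a small lookup can confirm where the toolkit ended up. The sketch below is illustrative only: `find_openvino_dir` is a hypothetical helper, the use of `$HOME` for the regular-user location is an assumption, and the check relies on the `bin/setupvars.sh` script that this guide sources later.

```shell
#!/usr/bin/env bash
# Print the first candidate directory that contains bin/setupvars.sh.
# Returns non-zero when none of the candidates matches.
find_openvino_dir() {
    local dir
    for dir in "$@"; do
        if [ -f "$dir/bin/setupvars.sh" ]; then
            echo "$dir"
            return 0
        fi
    done
    return 1
}

# Candidates: the root/administrator location, then the per-user location
# (assumed here to live under $HOME).
if install_dir="$(find_openvino_dir /opt/intel/openvino_2021 "${HOME:-}/intel/openvino_2021")"; then
    echo "OpenVINO found in: $install_dir"
else
    echo "No OpenVINO installation found in the default locations"
fi
```

If you used Customize to pick another directory, pass that directory as an extra argument to the function.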

You need to update several environment variables before you can compile and run OpenVINO™ applications. If you received a message that you were missing external software dependencies, listed under Software Requirements at the top of this guide, install them now before continuing on to the next section.

Open the macOS Terminal* or a command-line interface shell you prefer and run the following script to temporarily set your environment variables:

source /opt/intel/openvino_2021/bin/setupvars.sh

If you didn't choose the default installation option, replace /opt/intel/openvino_2021 with your directory.

If you instead add this command to a shell startup file so that it runs in every new terminal, save and close the file: press the Esc key, type :wq and press the Enter key. To verify your change, open a new terminal. You will see the message "OpenVINO environment initialized". The environment variables are set.

Configure the Model Optimizer

The Model Optimizer is a Python*-based command line tool for importing trained models from popular deep learning frameworks such as Caffe*, TensorFlow*, Apache MXNet*, ONNX* and Kaldi*.

The Model Optimizer is a key component of the OpenVINO toolkit. You cannot perform inference on your trained model without running the model through the Model Optimizer. When you run a pre-trained model through the Model Optimizer, your output is an Intermediate Representation (IR) of the network.

Model Optimizer Configuration Steps

You can choose to either configure the Model Optimizer for all supported frameworks at once, or for one framework at a time. Choose the option that best suits your needs. If you see error messages, verify that you installed all dependencies listed under Software Requirements at the top of this guide.

NOTE: If you installed OpenVINO to a non-default installation directory, replace /opt/intel/ with the directory where you installed the software.

Option 1: Configure the Model Optimizer for all supported frameworks at the same time.
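Before configuring the Model Optimizer, it can help to confirm that the environment script loads cleanly from the directory you actually installed to. The following is a sketch under stated assumptions: `load_openvino_env` is a hypothetical wrapper, and the default argument simply mirrors the /opt/intel/openvino_2021 path used in this guide.

```shell
#!/usr/bin/env bash
# Source setupvars.sh from the given install directory if it is present.
# Returns non-zero, without sourcing anything, when the script is missing.
load_openvino_env() {
    local install_dir="${1:-/opt/intel/openvino_2021}"
    local script="$install_dir/bin/setupvars.sh"
    if [ ! -f "$script" ]; then
        echo "setupvars.sh not found in $install_dir/bin" >&2
        return 1
    fi
    # Clear this function's positional parameters so the sourced script
    # does not mistake the directory argument for one of its own options.
    set --
    . "$script"
}

if load_openvino_env; then
    echo "OpenVINO environment loaded"
else
    echo "Skipping: pass your own directory if you installed elsewhere"
fi
```

For a non-default installation, call it with the directory as the argument, for example `load_openvino_env "$HOME/intel/openvino_2021"`. As with the plain source command, the variables only apply to the current shell.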
In the Intermediate Representation, the .xml file describes the network topology and the .bin file contains the weights and biases binary data.

The Inference Engine reads, loads, and infers the IR files, using a common API on the CPU hardware. For more information about the Model Optimizer, see the Model Optimizer Developer Guide.

Get Started

Now you are ready to get started. To continue, see the Get Started Guide for macOS to learn the basic OpenVINO™ toolkit workflow and run code samples and demo applications with pre-trained models on different inference devices.
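Since the Inference Engine needs both IR files, a quick presence check before running a sample can save a confusing error later. This is a minimal sketch: `has_ir_pair` and the `MODEL_BASE` variable are hypothetical names, and `./model` is a placeholder path rather than a file the toolkit creates.

```shell
#!/usr/bin/env bash
# Succeed only if both files of an IR pair exist for the given base path,
# e.g. "models/net" refers to models/net.xml and models/net.bin.
has_ir_pair() {
    local base="$1"
    [ -f "$base.xml" ] && [ -f "$base.bin" ]
}

# Placeholder base path; override by setting MODEL_BASE before running.
MODEL_BASE="${MODEL_BASE:-./model}"

if has_ir_pair "$MODEL_BASE"; then
    echo "IR pair found: $MODEL_BASE.xml and $MODEL_BASE.bin"
else
    echo "IR pair incomplete or missing for: $MODEL_BASE"
fi
```

The check only looks at file presence; it does not validate that the two files belong to the same model.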


Author: Jessica
